ControlBooth

General

- Threads

- 1K

- Messages

- 10.1K

Industry Announcements & Press Releases

Announcements and Press Releases from the Industry.

*Must be an Advertiser or have an upgraded membership to post.

- Threads

- 202

- Messages

- 733

Featured Articles & Interviews

Featured Articles and Interviews from the Industry.

- Threads

- 57

- Messages

- 136

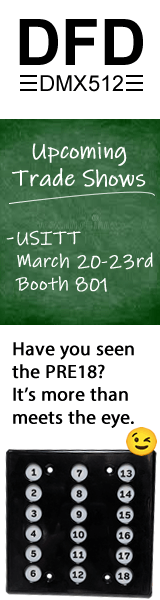

Conventions

Forums for Industry Conventions, LDI, USITT, PLASA, etc.

- Threads

- 329

- Messages

- 3.3K

Question of the Day

Question of the Day (or week), provided by the senior staff of ControlBooth.com. (If you have suggestions for future questions, please PM a Moderator or Senior Team member!)

- Threads

- 369

- Messages

- 5.8K

Education and Career Development

A forum for discussion on college selection, class feedback, teaching tips, as well as resumes, preparing for job interviews and career development.

- Threads

- 884

- Messages

- 8.4K

CB Classifieds

Must have 15 or more posts to create a classified ad (or be a small business or corporate member to post jobs).

- Threads

- 759

- Messages

- 2.6K

General Advice

General tips, tricks, and rules that every technician should know.

- Threads

- 1K

- Messages

- 14K

New Member Board

Start here and post a hello to the ControlBooth.com community!!

- Threads

- 3.8K

- Messages

- 16.9K

- Threads

- 154

- Messages

- 188

CB Newsletters

Copies of the CB Newsletter. If you want to sign up, click here and fill out the popup.

- Threads

- 78

- Messages

- 78

CB Discussions

Lighting and Electrics

For any discussions related to lighting and general electricity.

- Threads

- 15.2K

- Messages

- 171.3K

Sound, Music, and Intercom

A place to discuss sound reinforcement and design, music, and audible communication.

- Threads

- 4.7K

- Messages

- 50.8K

Stage Management and Facility Operations

From calling cues, to giving notes to actors, to putting down glow-tape. Having a hard time getting that funding you need? Have a good suggestion for a fundraiser? All about your theatre: discuss design, layouts, maintenance, repair, safety concerns, remodel ideas, new building designs, and of course, photos of your facility!

- Threads

- 1.3K

- Messages

- 18.4K

Scenery, Props, and Rigging

Can't figure out how to design or build that set or prop just so? Post your questions or tips and tricks here!

- Threads

- 2.8K

- Messages

- 28.6K

Special Effects

Can't figure out how to wow and amaze the audience or just trick them into thinking it's the real thing? Post your questions here! (including atmospherics and magic)

- Threads

- 1.1K

- Messages

- 10.1K

Multimedia, Projection, and Show Control

A place to discuss all aspects of video, multimedia, projection, and show control in theatre and other events.

- Threads

- 1.4K

- Messages

- 11.3K

Costumes and Makeup

Discuss costuming and makeup and any technical issues surrounding the art of creating a character.

- Threads

- 92

- Messages

- 567

- Threads

- 682

- Messages

- 10K

Technical Theatre History

For discussions about the history of any aspect of Technical Theatre.

- Threads

- 83

- Messages

- 799

- Categories

- 2

- Pages

- 3,114